What if you have a slow or limited internet connection and still want certain sites to be available offline? In this article, we will look at a trick that lets you browse a website offline using HTTrack.

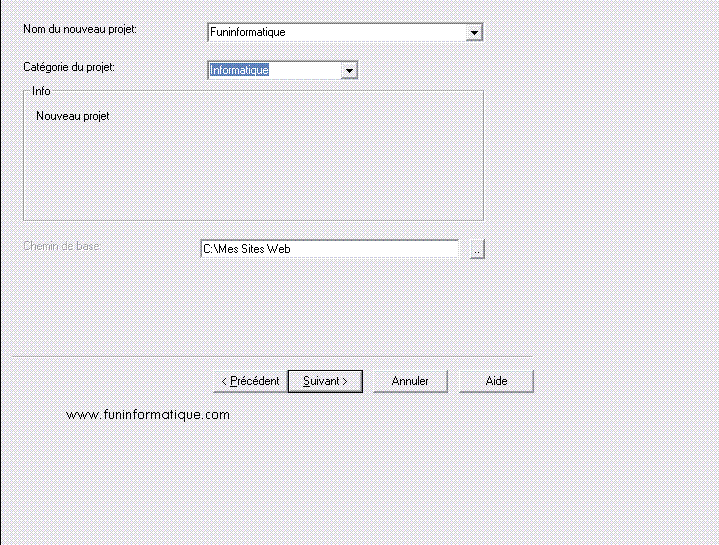

HTTrack is a website copier, a sort of "vacuum cleaner" for websites. It lets you download a website to your hard drive, recursively rebuilding all its directories and retrieving the HTML, images and other files from the server to your computer.

After downloading and installing HTTrack, launch it, give your project a name and choose its category.

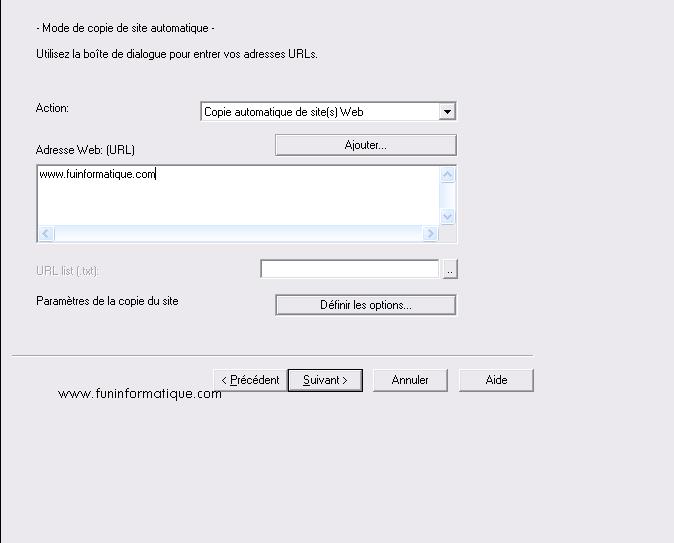

In the Web address (URL) field, enter the address of the site you want to download.

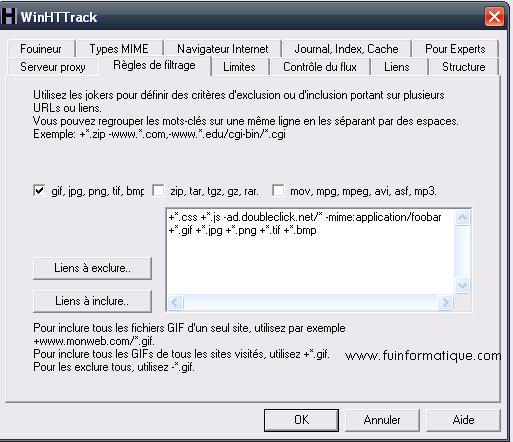

Then click the “Set options” button. In the Filtering rules tab, check the box for gif, jpg, png, tif and bmp to download the images on the retrieved pages. Check the other boxes as needed to download music, animations, etc.

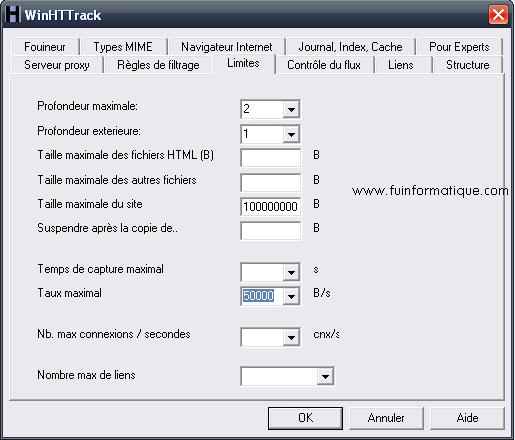
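
Behind that checkbox, HTTrack works with filter patterns. As a rough sketch, assuming HTTrack's `+*.ext` filter syntax (a leading `+` accepts a pattern and `*` is a wildcard), the rules for the image types listed above could be generated like this. The helper name `scan_rules` is hypothetical and is not part of HTTrack.

```python
# Image types mentioned in the Filtering rules step above.
IMAGE_EXTENSIONS = ["gif", "jpg", "png", "tif", "bmp"]


def scan_rules(extensions):
    """Return one '+*.ext' accept rule per file extension.

    Hypothetical helper: it only formats strings and does not call HTTrack.
    """
    return ["+*." + ext.lower().lstrip(".") for ext in extensions]


rules = scan_rules(IMAGE_EXTENSIONS)
print(" ".join(rules))  # prints: +*.gif +*.jpg +*.png +*.tif +*.bmp
```

The same helper could be reused for other media types, for example `scan_rules(["mp3"])` for music files.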

Then open the Limitations tab. Here you can set the maximum depth HTTrack will download in the page tree, starting from the address you specified. Level 1 corresponds to the home page only, level 2 to the home page and all the pages it links to, and so on.
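
To get a feel for how quickly the page count grows with depth, here is a minimal sketch of the level rule described above. It uses a made-up link graph (`LINKS` and its page names are invented for illustration) and a plain breadth-first walk. It is not HTTrack's actual crawler, only a model of "level 1 is the home page, level 2 adds the pages it links to".

```python
from collections import deque

# Hypothetical site: each page maps to the pages it links to.
LINKS = {
    "index.html": ["about.html", "blog.html"],
    "about.html": ["team.html"],
    "blog.html": ["post1.html", "index.html"],
    "team.html": [],
    "post1.html": ["post2.html"],
    "post2.html": [],
}


def pages_within_depth(links, start, max_depth):
    """Return {page: level} for every page reachable within max_depth.

    The start page counts as level 1, matching the numbering used above.
    """
    levels = {start: 1}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        if levels[page] >= max_depth:
            continue  # do not follow links beyond the depth limit
        for target in links.get(page, []):
            if target not in levels:
                levels[target] = levels[page] + 1
                queue.append(target)
    return levels


for depth in (1, 2, 3):
    print(depth, sorted(pages_within_depth(LINKS, "index.html", depth)))
```

On this toy site, depth 1 keeps only `index.html`, depth 2 adds `about.html` and `blog.html`, and depth 3 adds `team.html` and `post1.html`.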

Then specify the download level for links that point to pages outside the original site, 1 for example. If you are short on disk space, you can set a maximum site size in bytes, for example 100000000 for 100 MB.

Expand the Maximum rate list and choose 50 000 (bytes per second) to raise the cap on the download speed of pages and images.

Then open the Links tab and check the Download HTML first box. All web pages will then be downloaded before the images.
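
HTTrack also ships a command-line program, and the settings chosen so far can be expressed there too. The sketch below only builds the argument list and does not run anything. It assumes the documented `httrack` options `-O` (output folder), `-rN` (depth), `-%eN` (external link depth), `-MN` (maximum site size in bytes), `-AN` (maximum rate in bytes per second) and `-p7` (get HTML files first). The URL, the output folder and the function name are placeholders, and it is worth checking the flags against `httrack --help` for your version.

```python
def build_httrack_command(url, output_dir, depth=2, external_depth=1,
                          max_size_mb=100, max_rate_bytes=50000,
                          image_exts=("gif", "jpg", "png", "tif", "bmp")):
    """Build an httrack argument list mirroring the GUI settings above.

    Hypothetical helper: the flag mapping is an assumption based on the
    httrack command-line help, not something taken from the GUI itself.
    """
    max_size_bytes = max_size_mb * 1_000_000  # 100 MB -> 100000000 bytes
    cmd = [
        "httrack", url,
        "-O", output_dir,          # where the mirror is written
        f"-r{depth}",              # maximum depth inside the site
        f"-%e{external_depth}",    # depth for links leaving the site
        f"-M{max_size_bytes}",     # maximum overall size, in bytes
        f"-A{max_rate_bytes}",     # maximum transfer rate, bytes/second
        "-p7",                     # fetch HTML pages before other files
    ]
    cmd += [f"+*.{ext}" for ext in image_exts]  # accept image files
    return cmd


# Placeholder URL and folder, for illustration only.
command = build_httrack_command("https://example.com/", "./mirror")
print(command)
```

To actually start the download, the list could be passed to `subprocess.run(command, check=True)` on a machine where `httrack` is installed.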

The capture then begins. Be patient, as the operation can take quite a while: every link is analyzed, the pages and images are downloaded, and the site's structure is recreated on your hard drive. Once the download is complete, click the Explore the copy of the site button.

You can then browse the site, offline, directly from the downloaded files.

Need help? Ask your question and FunInformatique will answer you.